Due Diligence Questionnaire (DDQ): 5 Templates + Scoring 2026

Co-founder at Peony — I built the data room platform, with a background in document security, file systems, and AI.


By Deqian Jia, co-founder of Peony · Last updated May 11, 2026

I'm Deqian Jia, co-founder of Peony. I run a data room used by 4,300+ customers, which means I sit downstream of an absurd number of DDQs every quarter — LPs commissioning DDQs on emerging GPs, corporate buyers pushing 300-question lists at mid-market sellers, procurement teams attaching SIG Lites to their RFPs, and VC associates trying to compress a fund's preferred 60-question template into a 24-hour pre-Monday-IC scramble.

This post is the definitive guide to the DDQ as an artifact — the questionnaire itself, who issues it, what's in it, and how to score the responses. If you came looking for the seller-side file list, see the 174-document M&A DD checklist. If you came looking for the buyer-side analytical framework, see the 7-pillar investment DD checklist. This one is about the questions.

Quick answer. A due diligence questionnaire (DDQ) is the structured list of written questions an investor, buyer, or procurement team sends a counterparty before committing capital, signing a contract, or completing an acquisition. In 2026, the 5-Persona DDQ Template Library (LP-to-GP, buyer-to-seller M&A, vendor (SIG Lite + SOC 2), investor-to-startup VC, and acquirer-to-target post-LOI) covers ~95% of real deals. Score every response with the Green/Yellow/Red Response Band Rubric, whichever standard template you start from (ILPA DDQ 2.0, the AIMA 2025 Illustrative Questionnaire, or the Shared Assessments SIG). Median DDQ response time runs from ~0.8 days (cap table) to ~6.2 days (ESG/climate) per the 2026 DDQ Response-Time Benchmark. Watch for the eight DDQ traps: customer concentration above 30% and IP-assignment gaps re-price or kill the most deals.

The three proprietary frames I'll keep coming back to in this post:

- Frame A — the 5-Persona DDQ Template Library. Five archetypes covering ~95% of real DDQs.

- Frame B — the Green/Yellow/Red Response Band Rubric. A three-band score for every answer.

- Frame C — the 2026 DDQ Response-Time Benchmark. Median days to resolve by question category, inferred from anonymized data-room engagement patterns across our deal teams.

I use the 5-Persona DDQ Template Library, the Green/Yellow/Red Response Band Rubric, and the 2026 DDQ Response-Time Benchmark as the spine of every diligence process I help structure. The names are mine; the underlying mechanics are observable in any reasonably mature data room.

What is a due diligence questionnaire (DDQ) and who issues it?

A DDQ is the written, structured question artifact that initiates and documents a diligence process. The issuer is whoever is about to deploy money, sign a contract, or take operational risk: an LP about to commit to a fund, a buyer about to sign an LOI, a procurement team about to onboard a vendor, a VC partner about to lead a Series A, or an acquirer entering confirmatory diligence after the term sheet.

In every case the DDQ does four jobs at once:

- It anchors scope. The questions define what the diligence is actually about, which forces both sides to agree on what matters before the data starts flowing.

- It creates a record. Every question and every answer is logged. If the deal closes and something later turns out to be wrong, the DDQ is the chain-of-custody artifact regulators and litigators rely on.

- It paces the workflow. Phased DDQs unlock subsequent rounds of disclosure only when prior rounds are scored complete.

- It distributes accountability. Each question has an answer owner, a target response date, and a sign-off authority. Without that structure, "we're still gathering" expands to fill the entire timeline.

Three regulatory pillars make the DDQ effectively non-optional for institutional capital. The SEC fiduciary standard requires registered advisers to conduct a reasonable investigation and document the basis for every recommendation. ERISA's prudence rule (often described as a prudent-expert standard) requires pension fiduciaries to act with the care, skill, prudence, and diligence of a prudent person familiar with such matters. The common-law duty of care binds GPs to LPs regardless of registration status. In every case, "we did the work" must be defensible after the fact, and a completed, scored DDQ is the cleanest defense.

DDQ versus DD checklist. These two terms are routinely confused. The DDQ is the question artifact issued by the investor; the DD checklist is the document artifact assembled by the seller. The buyer's DDQ might ask, "What is your customer concentration as of the most recent quarter?" The seller's DD checklist then names the file that answers it — "Top 20 customer revenue schedule, last 8 quarters, audited." Both exist on every real deal; this post is about the question side.

Which 5 DDQ templates cover 95% of real deals? (Frame A)

The first thing I do with any deal team is map their situation to one of five archetypes. Real deals very rarely require an exotic sixth DDQ flavor; the 5-Persona DDQ Template Library is the working set.

| DDQ Archetype | Who issues | Who responds | Question count | Median response time | Typical bottleneck |
|---|---|---|---|---|---|
| LP-to-GP (fund commitment) | Limited partner (pension, endowment, FoF, family office, OCIO) | General partner / IR | 150-300 | 4-8 weeks | ESG/DEI module, fee disclosure, conflicts |
| Buyer-to-Seller (M&A) | Corporate buyer or PE sponsor | Target's CFO / advisors | 200-400 | 6-12 weeks | Customer concentration, IP chain, working capital |
| Vendor (procurement / TPRM) | Procurement, security, GRC | Vendor security + legal | 75-1,000 (SIG Lite to Core) | 2-6 weeks | SOC 2 evidence, sub-processor map, BCP |
| Investor-to-Startup (VC) | VC partner / associate | Founder + COO | 40-100 | 1-3 weeks | Cap table, IP assignment, ARR/NRR audit |
| Acquirer-to-Target (post-LOI) | Acquirer corp dev / advisors | Target executive team | 250-500 | 4-8 weeks | Confirmatory financials, employment offers, integration data |

Each archetype gets its own deep section below. The 5-Persona DDQ Template Library is the single most useful map I know for picking the right template, the right reviewer pool, and the right scoring discipline before a deal even starts. For sector-specific overlays see our M&A solution view, private equity workflow, venture capital workflow, and the broader due diligence solution page.

The persona that issues the DDQ also defines the answer authority: an LP-to-GP DDQ ultimately gets signed off by the GP's CCO and CFO; a Vendor DDQ by the vendor's CISO and legal; a VC DDQ by the founders themselves. Identifying the sign-off authority up front avoids the most common process failure — the responder's IR or legal lead drafting answers their CFO has not actually reviewed. Inside Peony, the per-persona permissions and NDA gate let each DDQ archetype see only the tier of disclosure its authority warrants, which is what makes the 5-Persona DDQ Template Library workable on a single data room.

What's in an LP-to-GP DDQ — and how is it different from ILPA's standard?

The LP-to-GP DDQ is the questionnaire a limited partner sends a general partner before committing to a private fund. In 2026 the dominant templates are:

- ILPA DDQ 2.0 (released 2021, updated periodically through the present), which the Institutional Limited Partners Association maintains. Industry adoption estimates put 60-70% of institutional LPs using it as their primary template. The DEI Monitoring Questionnaire (2023) and the PRI Climate Module (2025, with ILPA and iCI) are the two most consequential supplements.

- AIMA's 2025 Illustrative Questionnaire for the Due Diligence of Investment Managers, a modular template AIMA refreshed in March 2025 — its first significant update in eight years — adding a Private Markets strategy module and integrating cyber, cloud, AI, sustainability, and fund-director questions into the core. See AIMA's 2025 announcement for the module map.

Sections you'll see on almost every LP-to-GP DDQ:

- Firm and ownership. Legal entity, history, ownership structure, affiliations, regulatory registrations (SEC, FCA, FINRA, AIFMD).

- Investment strategy. Thesis, target sectors, stage, geography, deal sourcing, expected portfolio construction.

- Team. Key persons, biographies, succession, attribution of track record, time allocation, comp structure, GP commitment.

- Track record. Gross and net IRR/TVPI/DPI/MOIC by vintage, fund, and deal; loss ratios; realized vs. unrealized.

- Portfolio construction. Position sizing, concentration limits, follow-on policy, reserves.

- Terms and economics. Management fee, carry, hurdle, GP commitment, expense allocation, ILPA Principles alignment.

- Compliance and conflicts. Code of ethics, personal trading, gifts, allocation policy, related-party transactions.

- Valuation and reporting. Valuation methodology, frequency, third-party reviewer, audit firm, ILPA quarterly reporting standards.

- Operations and service providers. Administrator, custodian, audit, legal, cyber, business-continuity, insurance.

- ESG. PRI-aligned responsible investment DDQ section, plus the 2025 Climate Module supplement.

- DEI metrics. ILPA DEI Monitoring Questionnaire (2023 addition).

- Form ADV / Form PF cross-references. SEC-registered advisers attach their latest Form ADV and (where applicable) Form PF; the Form PF compliance date for the amended version is October 1, 2026, so 2026 DDQs increasingly ask GPs to confirm readiness for the amended schedule.
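Track-record answers are one place where the Green test of internal consistency can be run mechanically. The sketch below uses the standard multiples (DPI = distributions / paid-in capital, RVPI = residual NAV / paid-in capital, TVPI = DPI + RVPI) to recompute TVPI from the cash-flow figures in a response and flag any reported TVPI that doesn't reconcile. The `FundSnapshot` fields, the fund figures, and the 0.01x tolerance are illustrative assumptions, not part of the ILPA or AIMA templates.

```python
from dataclasses import dataclass


@dataclass
class FundSnapshot:
    """Hypothetical per-fund track-record row from an LP-to-GP DDQ response."""
    name: str
    paid_in: float        # capital called from LPs to date
    distributions: float  # cash returned to LPs to date
    nav: float            # residual (unrealized) net asset value
    reported_tvpi: float  # TVPI as stated in the GP's answer

    def __post_init__(self) -> None:
        if self.paid_in <= 0:
            raise ValueError("paid-in capital must be positive")


def dpi(f: FundSnapshot) -> float:
    # Distributed to paid-in: realized value only.
    return f.distributions / f.paid_in


def rvpi(f: FundSnapshot) -> float:
    # Residual value to paid-in: unrealized value only.
    return f.nav / f.paid_in


def tvpi(f: FundSnapshot) -> float:
    # Total value to paid-in is the sum of the realized and unrealized multiples.
    return dpi(f) + rvpi(f)


def tvpi_reconciles(f: FundSnapshot, tolerance: float = 0.01) -> bool:
    """True if the reported TVPI matches the recomputed figure within tolerance."""
    return abs(tvpi(f) - f.reported_tvpi) <= tolerance


fund_ii = FundSnapshot("Fund II", paid_in=100.0, distributions=80.0, nav=70.0, reported_tvpi=1.5)
fund_iii = FundSnapshot("Fund III", paid_in=50.0, distributions=5.0, nav=60.0, reported_tvpi=1.6)

for fund in (fund_ii, fund_iii):
    status = "consistent" if tvpi_reconciles(fund) else "follow up"
    print(f"{fund.name}: DPI {dpi(fund):.2f}x, TVPI {tvpi(fund):.2f}x -> {status}")
```

A mismatch is not proof of error (gross vs. net figures or different as-of dates can explain it), but it is a specific follow-up to raise before the answer is closed.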

How a real LP-to-GP DDQ deviates from ILPA's standard. No sophisticated LP actually uses ILPA DDQ 2.0 unmodified. Public-pension LPs (e.g., CalPERS, which publishes its fund allocations on its website but, under California law, does not disclose underlying portfolio companies) layer in jurisdiction-specific public-records and political-disclosure questions. University-endowment LPs layer in mission-alignment and exclusion-list questions. Funds-of-funds layer in cross-fund allocation policy. Family-office LPs layer in principal-alignment and values-fit questions. The ILPA DDQ is the floor; every meaningful LP adds 30-60 LP-specific supplemental questions on top.

The DDQ closes out when the LP's IC issues a final decision, but in practice the best LP DDQs evolve into the LP's ongoing monitoring framework — the same template re-issued annually, with deltas highlighted. That continuity is one reason emerging managers should treat their first DDQ response as the master version of their entire institutional narrative. If you're an emerging GP, our Fund I LP-tier stack guide covers the access architecture that survives a 23-LP first close.

What's in a Buyer-to-Seller DDQ for mid-market M&A?

The Buyer-to-Seller DDQ is the question list a corporate or PE buyer issues to a mid-market seller after initial interest but typically before the LOI is signed. It is the longest of the five archetypes — 200 to 400 questions is normal — and is structured to mirror the 10 standard DD categories on the seller-side data room.

The 10 categories you should expect:

- Corporate and governance. Cap table, articles, bylaws, board minutes, consents, subsidiary chart, foreign qualifications.

- Financial. Audited financials, monthly P&L, working-capital bridges, debt and debt-like items, projection methodology, QofE adjustments.

- Tax. Federal, state, and local returns; nexus exposure; sales tax; R&D credit support; transfer pricing; deferred tax positions.

- Legal and contracts. Material contracts, change-of-control provisions, MFN clauses, exclusivity, indemnities, IP assignments.

- Customers and revenue. Top customer concentration, ARR/MRR bridges (SaaS), churn cohorts, customer contracts, pricing.

- HR and employment. Employee list with comp, equity, classification (contractor vs. employee), benefit plans, severance, key-person agreements.

- IP and technology. Patent and trademark register, copyright assignments, open-source license inventory, tech stack architecture.

- Security and privacy. SOC 2 report, ISO certifications, penetration tests, DPIA, breach history, sub-processor list.

- Operations. Facilities, leases, capex schedule, suppliers, business-continuity plan.

- Regulatory and compliance. Licenses, permits, HSR-relevant filings, FCPA compliance, sanctions screening.

The 2026 HSR threshold is $133.9 million (size-of-transaction test for premerger notification), which materially affects DDQ scope: deals near or above that threshold add an HSR/regulatory section that asks for prior filings, second-request history, vertical-overlap mapping, and competitor-customer disclosures.

How the Buyer-to-Seller DDQ paces the deal — the 3-Tranche DDQ Pacing Model. Most experienced buyers issue the DDQ in three tranches:

- Tranche 1 (pre-LOI): corporate, top-line financial, top customer overview, IP register. ~80 questions. Two-week turnaround.

- Tranche 2 (post-LOI, exclusivity active): full financial detail, complete contract review, IP chain verification, HR data, sub-processor maps. ~200 questions. 4-6 week turnaround.

- Tranche 3 (confirmatory): updated financials through the latest month, disclosure schedules, employee-by-employee data, integration prep. ~80-100 questions. 2-3 week turnaround.
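The tranche structure is a gating rule, so it can be written down as one. A minimal sketch, assuming each question carries a tranche number and a band, that "scored complete" means every prior-tranche question is Green, and that Tranche 2 additionally waits on a signed LOI. `Question` and `unlocked_tranches` are hypothetical names, not a product API.

```python
from dataclasses import dataclass
from enum import Enum


class Band(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


@dataclass
class Question:
    qid: str
    tranche: int  # 1 = pre-LOI, 2 = post-LOI, 3 = confirmatory
    band: Band


def unlocked_tranches(questions: list[Question], loi_signed: bool) -> list[int]:
    """Return the tranches the seller should be answering now.

    Assumed rule: Tranche 1 is always open; Tranche N+1 opens only when every
    Tranche N question is Green; Tranche 2 also requires a signed LOI.
    """
    open_tranches = [1]
    for tranche in (2, 3):
        prior = [q for q in questions if q.tranche == tranche - 1]
        if not all(q.band is Band.GREEN for q in prior):
            break  # prior round not scored complete yet
        if tranche == 2 and not loi_signed:
            break  # Tranche 2 sits behind LOI execution
        open_tranches.append(tranche)
    return open_tranches


questions = [
    Question("T1-01", 1, Band.GREEN),
    Question("T1-02", 1, Band.GREEN),
    Question("T2-01", 2, Band.YELLOW),
    Question("T3-01", 3, Band.RED),
]
print(unlocked_tranches(questions, loi_signed=False))
print(unlocked_tranches(questions, loi_signed=True))
```

Under these assumptions, one open Yellow in Tranche 2 holds back Tranche 3 even after the LOI is signed; a team that lets Yellows through would relax the `all(...)` check to exclude only Reds.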

Sellers who treat the entire DDQ as one undifferentiated 300-question list routinely blow timelines. Sellers who pace responses to match the tranche structure close 30-45 days faster on average, in the experience of every advisor I work with. For the full file-side companion to this question artifact see our 174-document M&A DD checklist, and for the underlying process flow the M&A DD process guide. Peony's per-folder permissions are the mechanism most M&A teams use to gate Tranche 2 documents behind LOI execution.

The Buyer-to-Seller DDQ is the artifact where the 8 DDQ traps (below) most often manifest, because the question density is highest and the buyer's underwriting depends on every Green band actually being green.

What's in a Vendor DDQ, and why does SOC 2 attestation matter most?

The Vendor DDQ is the procurement questionnaire issued to a third-party supplier before contract signing or renewal. In 2026 the dominant baseline is the Shared Assessments Standardized Information Gathering (SIG) Lite, currently sized at approximately 126 questions across 21 control domains. Larger or higher-risk vendors get SIG Core, which scales to 1,000+ questions. The SIG questionnaire is the procurement-side industry standard; vendors selling into enterprises in 2025-2026 increasingly pre-publish their completed SIG Lite in a trust center to short-circuit repeated inbound requests.

The 6 domains every Vendor DDQ has to cover (our procurement default):

- Business and financial stability. Audited financials, ownership, parent company, sanctions exposure, M&A activity.

- Information security. Identity and access management, encryption, network segmentation, vulnerability management, penetration test results.

- Privacy and regulatory compliance. Data-processing agreement, sub-processor list, DPIA, regional compliance (GDPR, CCPA, HIPAA where relevant).

- Operational resilience. Business-continuity plan, disaster-recovery RTO/RPO, change-management, incident-response procedures.

- Legal and contract risk. Indemnities, liability caps, insurance certificates, dispute history.

- Ethics and ESG. Code of conduct, anti-bribery, modern-slavery disclosure, supplier-diversity, climate.

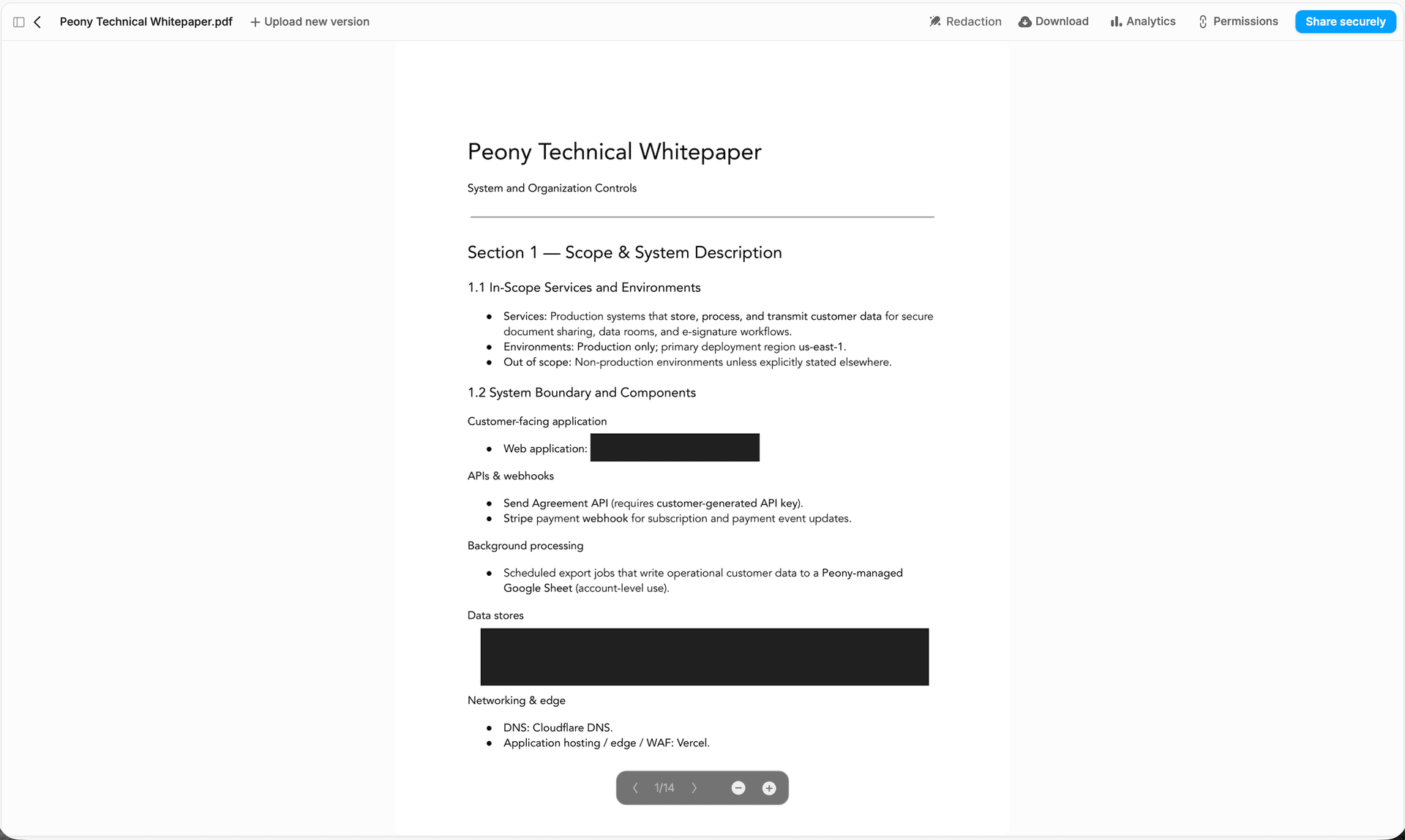

Why SOC 2 Type II is the single most weighted attachment. A SIG Lite describes controls. A SOC 2 Type II report, issued by an independent CPA firm, attests that those controls actually operated as described over a 6-12 month observation window. The distinction matters because procurement teams in 2025-2026 are increasingly skeptical of self-reported security: every vendor checks "Yes" on "Do you encrypt data at rest?", but only the ones with a Type II audit have a third party verifying the control didn't break for six months running. Treat the SIG response as the narrative; treat the SOC 2 Type II as the proof. If the vendor only has a SOC 2 Type I (a point-in-time opinion on control design, not on operating effectiveness), that's a Yellow band: request the Type II with a target close date.
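The SOC 2 rule in the paragraph above fits in a tiny lookup. A sketch under stated assumptions: the `report_type` strings are hypothetical, and treating a vendor with no SOC 2 report at all as Red is this sketch's own extrapolation from the rubric's "missing" criterion, not something the SIG prescribes.

```python
from enum import Enum
from typing import Optional


class Band(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


def band_soc2_evidence(report_type: Optional[str]) -> tuple[Band, str]:
    """Band a vendor's SOC 2 attachment and suggest the next step (illustrative rule).

    report_type: "type2", "type1", or None when no report was provided.
    """
    if report_type == "type2":
        # Independent attestation that controls operated over the observation window.
        return Band.GREEN, "close the question"
    if report_type == "type1":
        # Point-in-time opinion on design only: ask for the Type II.
        return Band.YELLOW, "request SOC 2 Type II with a target close date"
    if report_type is not None:
        raise ValueError(f"unknown report type: {report_type!r}")
    # Assumption: with no attestation, only self-reported SIG answers remain.
    return Band.RED, "escalate: no independent attestation"


for attachment in ("type2", "type1", None):
    band, next_step = band_soc2_evidence(attachment)
    print(f"{attachment}: {band.value} ({next_step})")
```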

The NIST CSF 2.0 effect. NIST's Cybersecurity Framework 2.0 (released in February 2024) elevated supply-chain risk management into its new Govern function, which signals board-level accountability for vendor risk. In practice that has pushed Vendor DDQs into a continuous-monitoring posture: not a one-time questionnaire but an annual or semi-annual re-issuance with breach-alert triggers in between. Vendor DDQ as an annual artifact is now the default, not the exception. Vendors facing rolling annual SIG re-issuance typically respond on Peony by pre-publishing their completed SIG Lite + SOC 2 Type II in a trust-center room with view expiry and per-viewer watermarks, so the same artifact serves every inbound buyer questionnaire without a manual re-send.

For the full file-side vendor framework see our Vendor DD checklist — this post handles the question artifact; that one handles the file inventory.

What's in an Investor-to-Startup DDQ for Series A/B?

The Investor-to-Startup DDQ is the questionnaire a VC partner issues a founder once the deal moves from intro to active diligence. It is the shortest of the five archetypes — 40 to 100 questions typically — because most VCs don't have the bandwidth (or the existing data) to run a 300-question Buyer-to-Seller-style process on a Series A target.

The 7 sections almost every VC DDQ covers:

- Founders and team. Backgrounds, prior companies, equity vesting status, time allocation, key-person agreements, advisor list.

- Company and corporate. Cap table (fully diluted, with options, SAFEs, notes), articles, board composition, prior financings.

- Product and tech. Architecture overview, codebase ownership, IP assignments (founders and contractors), open-source license inventory, security posture, AI/data usage.

- Market and competition. TAM/SAM/SOM, top-3 competitor map, win/loss data, sales channel architecture.

- Revenue and retention. ARR/MRR bridge, NRR cohort table, gross retention, CAC payback, LTV:CAC, customer concentration.

- Legal and compliance. Pending litigation, regulatory exposure, employment classification (contractor vs. W-2), benefit plans.

- Financials and projections. Last 24 months actuals, current burn, runway, next 24 months plan, scenario sensitivity.

2026 VC DDQ trends. Diligence that used to take a week now takes a month or two in the post-2021 funding climate. Per Carta's Q4 2025 State of Private Markets, the median Series A post-money valuation hit a record $78.7 million (up 37% year-over-year), which is one driver of the deeper diligence cycle: at higher entry valuations, partners have less margin for error. Two trends I see most consistently:

- Code-and-IP audits are now table stakes at Series A. A decade ago a code review was a Series B item. Today most institutional Series A leads either run a code audit themselves or insist the company commission one. The DDQ section on IP assignment chains and contractor work-for-hire is the most common Red-band source in VC diligence.

- NRR and cohort discipline. The Series A DDQ now asks for cohort retention at 6, 12, and 24 months, and increasingly asks for net dollar retention by ARR cohort. Founders who don't have the data warehouse built to produce this on demand routinely lose 1-2 weeks of process time to retroactive cohort construction.
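The cohort math behind that request is simple once the data exists. A minimal sketch, assuming ARR is recorded per customer at each checkpoint month and churned customers carry 0: net dollar retention is the cohort's current ARR over its starting ARR, and gross retention caps each customer at its starting ARR so expansion can't mask churn. The data shape and customer names are hypothetical.

```python
def cohort_retention(cohort: dict[str, dict[int, float]], month: int) -> tuple[float, float]:
    """Return (net dollar retention, gross retention) for one ARR cohort.

    `cohort` maps each customer active at month 0 to its ARR at each
    checkpoint month; a churned customer carries 0.0 from churn onward.
    """
    start = sum(arr[0] for arr in cohort.values())
    if start <= 0:
        raise ValueError("cohort has no starting ARR")
    current = sum(arr[month] for arr in cohort.values())
    # Gross retention ignores expansion by capping each customer at its starting ARR.
    retained = sum(min(arr[month], arr[0]) for arr in cohort.values())
    return current / start, retained / start


# Hypothetical starting cohort, ARR in $K at months 0, 6, and 12.
cohort = {
    "acme": {0: 100.0, 6: 120.0, 12: 150.0},   # expansion
    "globex": {0: 100.0, 6: 80.0, 12: 80.0},   # contraction
    "initech": {0: 50.0, 6: 50.0, 12: 0.0},    # churned before month 12
}
for month in (6, 12):
    nrr, grr = cohort_retention(cohort, month)
    print(f"month {month}: NRR {nrr:.0%}, gross retention {grr:.0%}")
```

Running the same function per starting cohort gives the NRR-by-ARR-cohort table the DDQ asks for; the hard part is the per-customer monthly ARR history, not the arithmetic.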

For the broader Series A/B framework see our startup due diligence guide.

What's in an Acquirer-to-Target DDQ once the LOI is signed?

The Acquirer-to-Target DDQ is the post-LOI confirmatory questionnaire, issued by a corporate acquirer (or sometimes a PE sponsor in its final exclusivity window) once a term sheet exists. It overlaps heavily with the Buyer-to-Seller DDQ but adds two things: (1) confirmatory data through the most recent month, and (2) integration-readiness data the target wouldn't normally surface pre-LOI.

Integration-specific sections only this archetype contains:

- People retention. Stay bonuses, retention pool sizing, employment-offer planning, key-person interviews.

- IT integration. SSO architecture, identity-provider migration, application portfolio, license counts, contract notice periods.

- Customer notification. Change-of-control consent requirements per contract, sequencing plan, communications language.

- Vendor portfolio rationalization. Overlap with acquirer's vendor stack, duplicate spend, exit costs.

- Brand and product. Brand-architecture decisions, product-merger plan, pricing migration, MRR cannibalization risk.

- Day-1, Day-30, Day-90, Day-180 playbook. Workstream owners, dependencies, integration committee structure.

The Acquirer-to-Target DDQ is also the artifact where R&W (representations and warranties) insurance underwriters run their own confirmatory cycle. R&W underwriters effectively re-issue a subset of the DDQ to the target's advisors, looking for inconsistencies between management answers and contemporaneous documents. A Red band at this stage almost always triggers an underwriting carve-out, which raises the deductible and the premium.

The transition from pre-LOI buyer DDQ to post-LOI acquirer DDQ is the moment when staged disclosure and the data room's audit trail matter most: every claim made pre-LOI is now being re-checked against the documents. If page-level analytics show the buyer's IP counsel actually spent 47 minutes on the contractor-assignment register but only 4 minutes on the founder-assignment register, that's a real signal about where the next round of questions will land. For the broader operational diligence framework that wraps this confirmatory phase see our operational due diligence guide; for sponsor-side acquirers see private equity due diligence. On Peony, deal teams typically run the full pre-LOI + post-LOI cycle on the Business plan at $40/admin/month, with NDA-gated tiers per phase.

How do you score DDQ responses with the Green/Yellow/Red rubric? (Frame B)

Every response to every question in every DDQ gets a band. The Green/Yellow/Red Response Band Rubric is the second of three frames I use across every diligence I structure.

GREEN — complete and sourced.

- The answer is internally consistent with the rest of the data-room artifacts.

- The answer cites a specific document or system of record (file name, page number, date).

- A reasonable reviewer would not need a follow-up to act on it.

- Status: question closed.

YELLOW — partial or follow-up required.

- The answer is incomplete, the source is missing, or the response punts to a later phase.

- The reviewer needs a specific additional data pull, a confirmatory schedule, or a clarifying email.

- The question stays open with an owner and a target close date.

- Status: tracked in the open-items log; revisited weekly.

RED — missing, evasive, or contradictory.

- The answer is absent, materially incomplete, or contradicts another data-room artifact.

- The response carries deal-stopper risk if it stays unresolved.

- Escalated to the deal lead, IC, or LP committee depending on the archetype.

- Status: workstream paused until cleared.
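The three bands reduce to an ordered set of checks: test for Red conditions first, then Yellow, and call an answer Green only when nothing else fires. A minimal sketch, assuming a reviewer records each criterion as a yes/no flag. The field names are hypothetical, and "evasive" is folded into `materially_incomplete` here because it is a judgment call rather than a flag.

```python
from dataclasses import dataclass
from enum import Enum


class Band(Enum):
    GREEN = "green"
    YELLOW = "yellow"
    RED = "red"


@dataclass
class Response:
    """Reviewer flags for one DDQ answer; defaults describe a clean answer."""
    answered: bool = True                 # a substantive answer exists
    contradicts_data_room: bool = False   # conflicts with another data-room artifact
    materially_incomplete: bool = False   # gaps large enough to carry deal-stopper risk
    cites_source: bool = True             # names a file, page, date, or system of record
    needs_follow_up: bool = False         # reviewer needs a data pull, schedule, or email
    deferred: bool = False                # answer punts to a later phase


def band(r: Response) -> Band:
    # Red: absent, materially incomplete, or contradictory answers escalate.
    if not r.answered or r.contradicts_data_room or r.materially_incomplete:
        return Band.RED
    # Yellow: usable, but the question cannot be closed yet.
    if not r.cites_source or r.needs_follow_up or r.deferred:
        return Band.YELLOW
    # Green: complete, sourced, consistent, and actionable as written.
    return Band.GREEN


examples = [Response(), Response(cites_source=False), Response(contradicts_data_room=True)]
print([band(r).value for r in examples])
```

Checking Red before Yellow matters: an unsourced answer that also contradicts the data room should escalate, not sit in the open-items log.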

Why three bands and not five. Five-band rubrics (introducing "approaching green" or "trending red" variants) sound more nuanced but in practice cause status meetings to drift into arguments about which intermediate band a question belongs in. Three bands force a decision: act on it now (Green), schedule a follow-up (Yellow), or escalate (Red). That decisiveness is what makes the rubric usable across 300-question DDQs without melting the workflow.

Banding is a team verb. Best practice is for two reviewers (typically deal lead + workstream owner) to independently band each response, then reconcile disagreements at the weekly status meeting. Disagreements between reviewers are often the most useful signal — they surface latent assumptions about what "complete" actually means for a given question.
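Reconciliation starts from a list of the questions the two reviewers banded differently. A minimal sketch, assuming each reviewer's bands are kept as a question-ID-to-band mapping; the function name and question IDs are hypothetical. The output is the status-meeting agenda.

```python
def band_disagreements(lead: dict[str, str], owner: dict[str, str]) -> dict[str, tuple[str, str]]:
    """Question IDs where two independent reviewers banded differently.

    Each dict maps question ID -> "green" / "yellow" / "red". A question only one
    reviewer has banded is reported with "unbanded" for the other reviewer.
    """
    agenda = {}
    for qid in sorted(lead.keys() | owner.keys()):
        lead_band = lead.get(qid, "unbanded")
        owner_band = owner.get(qid, "unbanded")
        if lead_band != owner_band:
            agenda[qid] = (lead_band, owner_band)
    return agenda


deal_lead = {"FIN-04": "green", "IP-02": "yellow", "HR-07": "red"}
workstream_owner = {"FIN-04": "green", "IP-02": "red", "HR-07": "red", "TAX-01": "yellow"}
for qid, (a, b) in band_disagreements(deal_lead, workstream_owner).items():
    print(f"{qid}: deal lead {a}, workstream owner {b}")
```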

Banding feeds the IC memo. When the diligence wraps, the IC memo's first page summarizes the count of Green/Yellow/Red bands by category. Investment committees that see "12 Reds in IP/Contracts" know exactly where to focus the discussion, instead of leafing through 80 pages of workstream summaries. The Green/Yellow/Red Response Band Rubric is what makes that page possible. For specialty diligence verticals where the rubric is especially load-bearing, see IP due diligence and AI due diligence, where contractor-assignment and model-provenance questions are the most common Red-band sources.
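The IC memo's first page is a group-by over the banded DDQ. A minimal sketch, assuming each question has already been reduced to a (category, band) pair; the categories and counts are invented for illustration. Sorting by Red count puts the categories that need committee attention at the top.

```python
from collections import Counter


def band_summary(banded: list[tuple[str, str]]) -> dict[str, Counter]:
    """Count bands per category; `banded` holds one (category, band) pair per question."""
    summary: dict[str, Counter] = {}
    for category, band in banded:
        summary.setdefault(category, Counter())[band] += 1
    return summary


responses = [
    ("IP/Contracts", "red"), ("IP/Contracts", "red"), ("IP/Contracts", "yellow"),
    ("Financial", "green"), ("Financial", "green"), ("Financial", "yellow"),
    ("HR", "green"),
]
summary = band_summary(responses)
# Most Reds first; a Counter returns 0 for bands a category never received.
for category, counts in sorted(summary.items(), key=lambda kv: -kv[1]["red"]):
    print(f"{category}: {counts['green']} Green / {counts['yellow']} Yellow / {counts['red']} Red")
```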

The third use of the rubric: it survives the deal. Post-close, the IC memo's Green/Yellow/Red counts become part of the auditable record. If a regulator or LP later asks "what did you know at signing?" the answer is the banded DDQ, with timestamps. The Green/Yellow/Red Response Band Rubric is therefore not just a workflow tool but a compliance artifact in its own right.

What's the median DDQ response time by question type (2026 benchmark)? (Frame C)

The 2026 DDQ Response-Time Benchmark is the third of my three frames. The figures below are inferred from anonymized engagement patterns across deal teams using virtual data rooms in 2026; the relative ordering is consistent, while absolute days vary by deal.

| Question type | Median response time | Typical reason for delay |
|---|---|---|
| Cap table / ownership | ~0.8 days | Single source, frequently maintained |
| Financial statements (audited) | ~1.2 days | Pre-assembled at audit |
| Customer concentration | ~2.1 days | Requires contract-level pull |
| HR / employment | ~2.8 days | Legal review for PII gating |
| Regulatory permits | ~3.4 days | Distributed across business units |
| IP schedules | ~4.7 days | Assignment chain verification, especially contractors |
| Litigation | ~5.1 days | Outside counsel review is gating |
| ESG / environmental | ~6.2 days | Data spread across systems and external advisors |

Why the ordering is stable across deals. Three factors compound:

- Source location. Cap table and audited financials live in one place. ESG data spans HR, ops, facilities, supply chain, and external sustainability consultants.

- Review gating. Cap table updates need an internal sign-off. Litigation responses need outside counsel approval. The number of gates determines the calendar minimum.

- Disclosure exposure. IP and ESG responses carry the longest tail of follow-up because they trigger schedule revisions to side letters or reps & warranties.

How to use the benchmark. Before launching a DDQ, sequence questions so the slowest categories start first. If you wait until week 4 to start ESG, the DDQ closes in week 10 at the earliest. If ESG starts on day 1 in parallel with cap table, total cycle compresses by 1-2 weeks for the same effort.
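The effect of starting slow categories first can be sketched as a small scheduling problem. This version uses the "longest processing time first" heuristic (a standard greedy scheduling rule, swapped in here as an illustration rather than taken from the benchmark), the median days from the benchmark table, and an assumed three parallel responder workstreams. It ignores follow-up rounds, so it shows the ordering effect rather than forecasting a real cycle.

```python
import heapq

# Median days per category, copied from the 2026 DDQ Response-Time Benchmark table.
MEDIAN_DAYS = {
    "cap_table": 0.8, "financials": 1.2, "customer_concentration": 2.1, "hr": 2.8,
    "regulatory": 3.4, "ip": 4.7, "litigation": 5.1, "esg": 6.2,
}


def schedule(order: list[str], lanes: int) -> tuple[float, dict[int, list[str]]]:
    """Greedy list scheduling: give each category, in `order`, to the least-loaded lane.

    `lanes` is an assumed number of parallel responder workstreams.
    Returns (days until the last category finishes, categories per lane).
    """
    if lanes < 1:
        raise ValueError("need at least one workstream")
    heap = [(0.0, lane) for lane in range(lanes)]  # (busy-until day, lane id)
    assignments: dict[int, list[str]] = {lane: [] for lane in range(lanes)}
    for category in order:
        busy_until, lane = heapq.heappop(heap)
        assignments[lane].append(category)
        heapq.heappush(heap, (busy_until + MEDIAN_DAYS[category], lane))
    return max(busy for busy, _ in heap), assignments


slowest_first = sorted(MEDIAN_DAYS, key=MEDIAN_DAYS.get, reverse=True)
slowest_last = list(reversed(slowest_first))
for label, order in (("slowest first", slowest_first), ("slowest last", slowest_last)):
    days, _ = schedule(order, lanes=3)
    print(f"{label}: ~{days:.1f} days")
```

Under these assumptions the slowest-first order finishes in about 9.1 days versus about 10.8 days when ESG is left for last. Real DDQs add follow-up rounds, and those fall hardest on the slow categories.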

The corollary for responders. If you're answering a DDQ, building the slow-category answer artifacts before the DDQ arrives is the single highest-leverage thing you can do. Pre-assembling an IP schedule, an ESG narrative, and a litigation summary cuts the median response time on those categories by 60-70% in our deal teams. The 2026 DDQ Response-Time Benchmark is, in effect, a pre-flight checklist for responders.

The benchmark also matters for investor pacing. Pension LPs running a DDQ on a $200M Fund III tend to under-budget time for the ESG/Climate Module, then hit the IC date with five Yellow bands still open. The median ESG response time of ~6.2 days is observable in our deal data; it should be observable in your project plan, too. The 2026 DDQ Response-Time Benchmark is the simplest tool to keep that pacing honest.

Which 8 DDQ traps actually kill deals?

The eight DDQ traps that re-price or kill deals most often, in rough order of frequency, are: (1) customer-concentration cliff, (2) IP assignment chain breaks, (3) revenue-recognition disputes under ASC 606, (4) sales-tax nexus exposure, (5) working-capital target disputes, (6) key-person dependency, (7) cybersecurity gap on legacy systems, and (8) ESG/climate data gaps. Each one maps to a Red band in the Green/Yellow/Red Response Band Rubric and each is preventable if the issuer asks the right follow-up before the IC date:

1. The customer-concentration cliff. A top customer below 10% of revenue is clean; 10-20% is manageable; 20-30% triggers 10-20% valuation compression and earnout/holdback structures; above 30% causes many PE buyers and SBA lenders to pass. The trap is almost always under-disclosure on the initial DDQ, with the real number surfacing weeks later in commercial DD. (How concentration kills deals, Mineola Search Partners, June 2025.)

2. IP assignment chain breaks. Founder code that pre-dates incorporation, contractor work without work-for-hire, third-party open-source under copyleft licenses, joint developments without IP ownership clarity. The DDQ question is simple; the answer is structurally hard for most early-stage companies. The Red-band fix usually requires reps & warranties carve-outs or, sometimes, pre-close remediation.

3. Revenue-recognition disputes. ASC 606 (US GAAP) requires careful judgment on contract performance obligations, variable consideration, and contract modifications. When a seller recognizes revenue earlier than the buyer's accounting team would, the QofE adjustments routinely take 10-20% out of normalized EBITDA.

4. Sales-tax nexus exposure. Post-Wayfair (2018), economic nexus extends sales-tax obligations to states where the seller has no physical presence. The DDQ asks "Where do you collect sales tax?" Sellers often answer "Where we are domiciled," and the buyer's tax DD turns up six states of unrecorded liability.

5. Working-capital target disputes. The DDQ usually asks for "normalized working capital" and the seller answers with a 12-month average. The buyer's QofE provider asks for a 24-month seasonal-adjusted average and arrives at a different number. The gap becomes a purchase-price adjustment at signing, often $1-3M on a $50M deal.

6. Key-person dependency. "What happens if Founder X leaves?" The DDQ question is universal. The answer is rarely satisfactory at sub-$50M deals, and the typical fix is a 24-36 month key-person earnout or escrow. Sellers under-prepare this answer at their cost.

7. Cybersecurity gap on legacy systems. The DDQ asks about SOC 2 and incident response. The seller answers for its current systems and forgets the legacy on-prem environment that handles 8% of revenue. The buyer's cyber DD turns it up. The fix usually requires a pre-close remediation budget and a representation carve-out; on the disclosure side, screenshot protection plus view expiry on legacy-system documentation gives the seller a defensible data-exfiltration posture during the remediation window.

8. ESG and climate data gaps. Increasingly common as IFRS S2 adoption expands across more than 30 jurisdictions. The DDQ asks for Scope 1, 2, and 3 emissions; the seller has Scope 1 and 2 only. The fix is a baselining commitment with a 12-month timeline, which usually doesn't break the deal but does add cost to integration.
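Because trap 1 is usually under-disclosure, the safer move is to recompute concentration from the revenue schedule rather than accept the DDQ answer as stated. A minimal sketch: the tier cut-offs come from trap 1, while the boundary handling (exactly 10% or 20% falls into the higher tier, exactly 30% stays below "above 30%") and the customer figures are assumptions.

```python
def top_customer_share(revenue_by_customer: dict[str, float]) -> tuple[str, float]:
    """Largest customer and its share of total revenue for the period."""
    total = sum(revenue_by_customer.values())
    if total <= 0:
        raise ValueError("total revenue must be positive")
    top = max(revenue_by_customer, key=revenue_by_customer.get)
    return top, revenue_by_customer[top] / total


def concentration_tier(share: float) -> str:
    """Map a top-customer share to the trap-1 tiers (boundary handling assumed)."""
    if share < 0.10:
        return "clean"
    if share < 0.20:
        return "manageable"
    if share <= 0.30:
        return "expect valuation compression and earnout/holdback structures"
    return "many PE buyers and SBA lenders pass"


# Hypothetical trailing-twelve-month revenue by customer, $M.
revenue = {"Northwind": 2.6, "Contoso": 1.8, "Fabrikam": 1.6,
           "Tailspin": 1.2, "Litware": 1.0, "Adatum": 0.8}
name, share = top_customer_share(revenue)
print(f"{name}: {share:.0%} of revenue -> {concentration_tier(share)}")
```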

Each trap maps to a Red band in the Green/Yellow/Red Response Band Rubric. The pattern recognition matters: experienced deal leads see traps 1, 2, and 4 every quarter and bake remediation budgets in before the DDQ even goes out. Less experienced teams discover them via the DDQ and then renegotiate. Both outcomes are survivable; the difference is calendar time and price.
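Trap 5 is easiest to see with numbers. The sketch below builds a hypothetical 24-month net working capital series with a seasonal pattern and a step up in the second year, then compares the seller's 12-month trailing average with a 24-month average. A plain 24-month mean stands in for the buyer's seasonally adjusted method, which isn't specified here.

```python
from statistics import mean

# Hypothetical month-end net working capital, $M, oldest month first.
YEAR_1 = [4.0, 4.2, 4.5, 5.0, 5.5, 6.0, 6.0, 5.5, 5.0, 4.5, 4.2, 4.0]
YEAR_2 = [x + 2.0 for x in YEAR_1]  # same seasonality on a higher base after growth
monthly_nwc = YEAR_1 + YEAR_2


def trailing_average(series: list[float], months: int) -> float:
    """Average of the most recent `months` observations."""
    if months > len(series):
        raise ValueError("not enough history")
    return mean(series[-months:])


seller_peg = trailing_average(monthly_nwc, 12)  # seller's 12-month answer
buyer_peg = trailing_average(monthly_nwc, 24)   # stand-in for the QofE 24-month view
print(f"seller peg ${seller_peg:.2f}M, buyer peg ${buyer_peg:.2f}M, "
      f"gap ${seller_peg - buyer_peg:.2f}M")
```

On this invented series the averaging window alone moves the peg by about $1.0M, which is why the window itself belongs in the DDQ answer.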

How does the DDQ flow into the data room and staged disclosure? (Peony spine)

A well-run DDQ and a well-run data room are two views of the same workflow. The DDQ defines the questions; the data room is where the answer documents live; staged disclosure controls who sees what at each phase.

The three-tier disclosure spine I recommend:

- Tier 1 — Preliminary disclosure. Company overview, anonymized top-10 customer list, redacted financials, corporate structure. Available behind NDA to all qualified parties. Use Peony's NDA gate so every viewer signs before any document loads.

- Tier 2 — Detailed disclosure. Full financials, complete customer schedules, material contracts (with redaction where appropriate), IP register, HR summary. Available only to shortlisted bidders or to LPs progressing toward commitment. Use per-folder permissions to gate Tier 2 separately from Tier 1.

- Tier 3 — Confirmatory disclosure. Side letters, employee-by-employee data, sensitive customer contracts, disclosure schedules, anchor LP terms. Available to the final bidder or committed LP only. Use view expiry plus link management and per-LP watermarks so any leak is traceable.
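The tier rules above reduce to a simple access check. The sketch below is hypothetical: the three boolean viewer states (NDA signed, shortlisted, final counterparty) and the helper names are assumptions for illustration, not Peony's permission model.

```python
from enum import IntEnum


class Tier(IntEnum):
    PRELIMINARY = 1   # Tier 1: overview, anonymized customers, redacted financials
    DETAILED = 2      # Tier 2: full financials, customer schedules, IP register
    CONFIRMATORY = 3  # Tier 3: side letters, employee-level data, anchor LP terms


def max_tier(nda_signed: bool, shortlisted: bool, final_counterparty: bool) -> int:
    """Highest tier a viewer may open; 0 means nothing loads until the NDA is signed.

    Assumed viewer states, not a real product API.
    """
    if not nda_signed:
        return 0
    if final_counterparty:
        return Tier.CONFIRMATORY
    if shortlisted:
        return Tier.DETAILED
    return Tier.PRELIMINARY


def can_view(doc_tier: Tier, nda_signed: bool, shortlisted: bool,
             final_counterparty: bool) -> bool:
    # A document is visible if its tier is at or below the viewer's ceiling.
    return doc_tier <= max_tier(nda_signed, shortlisted, final_counterparty)
```

Because tiers are ordered integers, each later phase unlocks everything before it. That matches the cumulative disclosure pattern described above.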

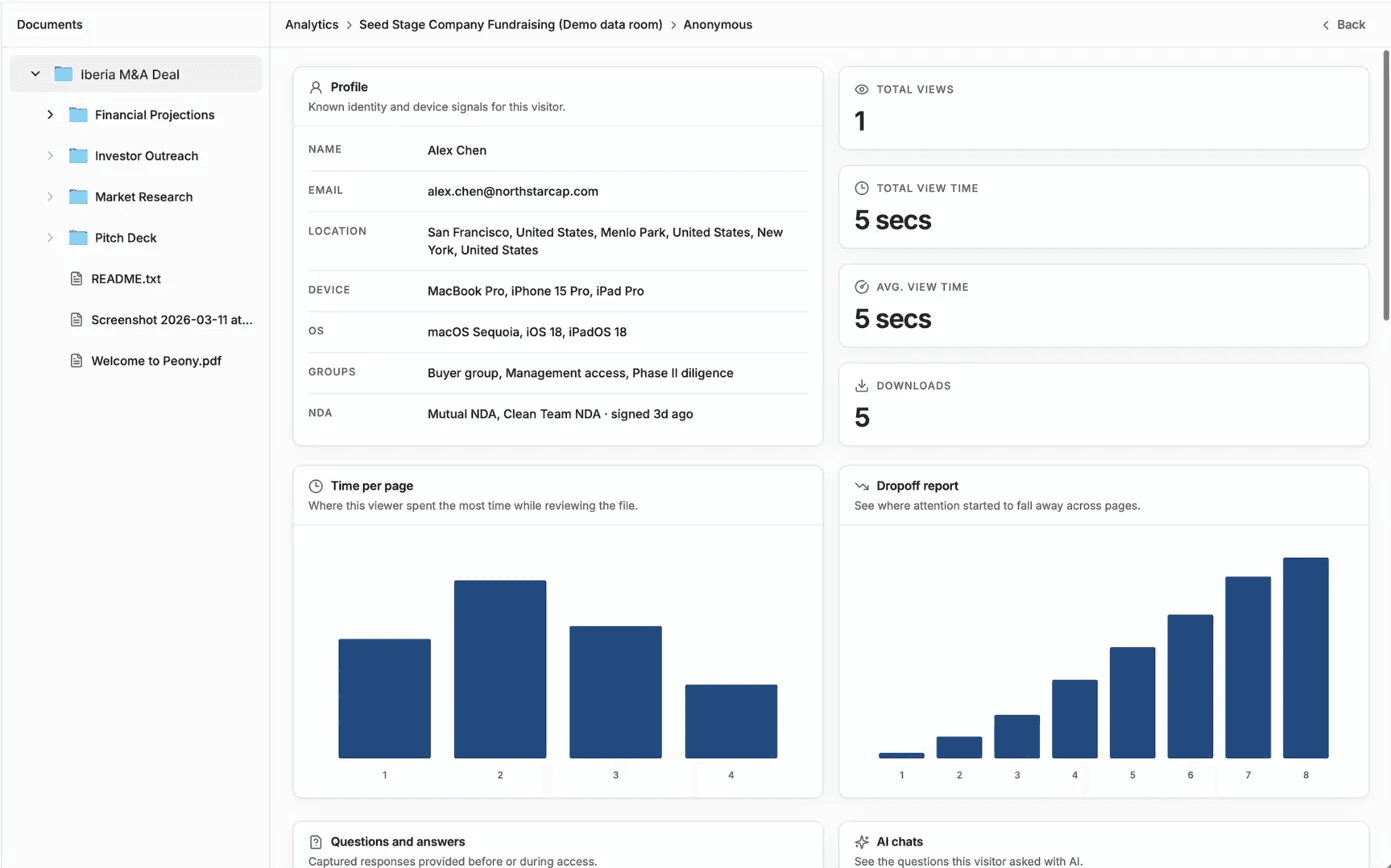

Why DDQ pacing requires page-level analytics. When 12 LPs are reviewing the same fund DDQ simultaneously, calendar-based follow-up is a guess; engagement-based follow-up runs on signal. Peony's page-level analytics tell you which LP spent 14 minutes on the fund-econ section and which one bounced after 90 seconds. That is your follow-up priority list. The same logic applies to M&A: if the buyer's IP counsel spent 47 minutes on the contractor-assignment register, the next round of follow-ups is going to be about contractor IP. Anticipating that lets the seller pre-load the answer artifact.
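Turning dwell time into a follow-up list is a group-and-sort. This is a minimal sketch that assumes view events have been exported as hypothetical `(viewer, section, seconds)` tuples. It does not reflect any real analytics export format.

```python
from collections import defaultdict

# Hypothetical exported view events: (viewer, section, seconds_on_page).
events = [
    ("LP-A", "fund-econ", 840),    # 14 minutes
    ("LP-B", "fund-econ", 90),     # bounced after 90 seconds
    ("LP-A", "track-record", 300),
    ("LP-C", "fund-econ", 420),
]


def follow_up_priority(events, section):
    """Rank viewers by total dwell time on one section, longest first."""
    totals = defaultdict(int)
    for viewer, sec, seconds in events:
        if sec == section:
            totals[viewer] += seconds
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)


print(follow_up_priority(events, "fund-econ"))
# [('LP-A', 840), ('LP-C', 420), ('LP-B', 90)]
```

Summing per viewer, rather than taking the longest single visit, rewards reviewers who keep returning to a section. That is usually the stronger interest signal.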

Audit trail compliance. Every DDQ question and every banded response should produce a timestamped log entry. The audit trail satisfies three downstream needs: (1) R&W insurance underwriting; (2) regulator inquiries (SEC, FCA, state AG); (3) post-close LP/buyer audit. The data room's role is to make that trail automatic. Every view, download, NDA signature, and Q&A exchange is logged with a timestamp and viewer identity, and the same identity is burned into each viewed page via dynamic watermark.
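One way to make such a log tamper-evident is to chain each entry to the hash of the one before it. This sketch is illustrative only. Hash chaining is a technique I'm swapping in as an assumption, and it is not a description of how Peony or any particular VDR stores its audit trail. The `AuditLog` class and its fields are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

FIELDS = ("ts", "viewer", "action", "target", "prev")


class AuditLog:
    """Hypothetical append-only log; each entry stores the previous entry's hash."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so the same body always hashes identically.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, viewer: str, action: str, target: str, ts: str = None) -> dict:
        ts = ts or datetime.now(timezone.utc).isoformat()
        prev = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"ts": ts, "viewer": viewer, "action": action,
                "target": target, "prev": prev}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; False if any entry was edited, dropped, or reordered."""
        prev = "0" * 64
        for e in self.entries:
            body = {k: e[k] for k in FIELDS}
            if e["prev"] != prev or self._digest(body) != e["hash"]:
                return False
            prev = e["hash"]
        return True
```

A call like `log.record("lp-a", "band", "Q-17 GREEN")` records a banded response. Editing any earlier entry later makes `verify()` return `False`, which is the property an R&W underwriter or regulator cares about.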

Where Peony fits. The full pre-LOI + post-LOI DDQ cycle runs on Peony's Business plan at $40/admin/month. It includes page-level analytics, visitor groups for per-archetype access, NDA gates for staged disclosure, dynamic watermarks burned into every page, and smart Q&A for routing DDQ follow-ups to the right reviewer. Compare that with VDR pricing structures that put DDQ-relevant features behind enterprise tiers.

Related resources

- AI due diligence — DD-of-AI 5-Layer audit + EU AI Act exposure

- IP due diligence — 5-Asset Encumbrance Matrix

- Operational due diligence — 8-System audit + 3-Axis severity

- Private equity due diligence — 6-strategy playbook

- M&A due diligence process guide — hub

- 174-document DD data room checklist — file-side companion

- Investment due diligence checklist — buyer-side checklist

- Data room for emerging managers — Fund I LP-tier stack

- Top 10 virtual data room providers 2026 — VDR shortlist

Frequently asked questions

What is a due diligence questionnaire (DDQ)?

A due diligence questionnaire (DDQ) is a structured set of written questions an investor, buyer, procurement team, or regulator sends to a counterparty before committing capital, signing a contract, or completing an acquisition. It is the artifact that turns informal diligence into a documented record. Unlike a DD checklist — which lists the files the seller must produce — the DDQ lists the questions the issuer wants answered, often with citations back to source documents. In practice, every institutional deal in 2026 begins with one of five DDQ archetypes: LP-to-GP (fund commitment), buyer-to-seller (M&A), vendor (third-party risk), investor-to-startup (VC), or acquirer-to-target (post-LOI operational deep-dive).

Which 5 DDQ templates cover 95% of real deals?

If you're trying to figure out which template to start from, map your situation to one of five archetypes: (1) LP-to-GP DDQ, anchored on the ILPA DDQ 2.0 and the 2025 PRI Climate Module supplement, used by limited partners committing to private equity, venture, or credit funds. (2) Buyer-to-Seller DDQ, used in mid-market corporate M&A, typically 200 to 400 questions across financial, legal, IP, HR, and commercial categories. (3) Vendor DDQ, anchored on the Shared Assessments SIG Lite (~126 questions) and SOC 2 Type II attestation, used by procurement to onboard suppliers. (4) Investor-to-Startup DDQ, used by VC partners for Series A and B, focused on cap table, ARR/NRR mechanics, founder background, and IP assignment. (5) Acquirer-to-Target DDQ, issued after the LOI is signed, focused on confirmatory operational data and integration planning. Together they cover the vast majority of institutional deal flows.
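The mapping above is essentially a lookup on who issues the DDQ, plus one timing flag for M&A. Here is a deliberately simplified sketch. The issuer labels and the `pick_template` helper are hypothetical, and real deals with hybrid issuers will need judgment.

```python
def pick_template(issuer: str, post_loi: bool = False) -> str:
    """Map the DDQ issuer to one of the five archetypes (simplified, hypothetical labels)."""
    issuer = issuer.lower()
    if issuer == "lp":
        return "LP-to-GP (ILPA DDQ 2.0 + PRI Climate Module)"
    if issuer == "procurement":
        return "Vendor (SIG Lite + SOC 2 Type II)"
    if issuer == "vc":
        return "Investor-to-Startup"
    if issuer == "acquirer":
        # Same counterparty, different artifact depending on whether the LOI is signed.
        return "Acquirer-to-Target" if post_loi else "Buyer-to-Seller"
    raise ValueError(f"no archetype for issuer {issuer!r}")
```

The `post_loi` flag captures the only split that depends on deal stage rather than issuer type. Everything else is decided by who is asking.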

How do you score DDQ responses with the Green/Yellow/Red rubric?

The Green/Yellow/Red rubric is a three-band response classification we use across every DDQ archetype. GREEN means the response is complete, internally consistent, and sourced to a specific document or system of record; the question is closed. YELLOW means the response is partial, ambiguous, or requires a follow-up data pull; the question stays open, owner and ETA assigned. RED means the response is missing, evasive, or contradicts another data-room artifact; the question is escalated to the deal lead or investment committee as a potential deal-stopper. Every question in every DDQ should get a band before the next phase begins. The rubric removes ambiguity from status meetings and creates an auditable chain for IC memos.
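The rubric can be written as a small decision function. This sketch assumes a reviewer has already judged four hypothetical attributes of each response. The field names are illustrative and not part of any published standard. RED checks run first, so a contradiction always escalates even if the answer looks complete.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Band(Enum):
    GREEN = "closed"
    YELLOW = "open: owner + ETA assigned"
    RED = "escalate to deal lead / IC"


@dataclass
class Response:
    answered: bool                # False if missing or evasive
    complete: bool                # fully answers the question, internally consistent
    contradicts_data_room: bool   # conflicts with another data-room artifact
    source_doc: Optional[str]     # document or system of record cited, if any


def band(r: Response) -> Band:
    # RED first: missing, evasive, or contradictory answers are potential deal-stoppers.
    if not r.answered or r.contradicts_data_room:
        return Band.RED
    # GREEN only when complete AND sourced.
    if r.complete and r.source_doc:
        return Band.GREEN
    # Everything else is partial, ambiguous, or unsourced: keep it open.
    return Band.YELLOW
```

A complete answer with no cited source lands in YELLOW, not GREEN. That is what keeps the rubric auditable for IC memos.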

What's the median DDQ response time by question type in 2026?

Inferred from anonymized response-cycle patterns across deal teams using virtual data rooms in 2026: cap table and ownership questions resolve fastest (around 0.8 days), followed by financial-statement questions on audited periods (~1.2 days). Customer-concentration questions take longer because they require contract-level pulls (~2.1 days). HR and employment questions need legal review (~2.8 days). Regulatory permits and licenses can stretch into multi-day pulls (~3.4 days). IP schedules with contractor assignment chains average ~4.7 days. Litigation disclosures are slower still (~5.1 days) because counsel review is gating. ESG and environmental questions are the slowest category (~6.2 days) because data is often spread across systems and external advisors. Exact figures vary by deal but the relative ordering is consistent across the deals we observe.
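The medians above can double as a rough planning tool. The sketch assumes, as a simplification not stated in the benchmark, that open categories are worked in parallel. Under that assumption the slowest open category gates the batch. The category keys are hypothetical labels.

```python
# Median response days per question category, from the 2026 benchmark figures above.
MEDIAN_DAYS = {
    "cap_table": 0.8,
    "financials_audited": 1.2,
    "customer_concentration": 2.1,
    "hr_employment": 2.8,
    "regulatory_permits": 3.4,
    "ip_contractor_assignment": 4.7,
    "litigation": 5.1,
    "esg_climate": 6.2,
}


def expected_close(open_categories):
    """Rough days until a batch closes, assuming categories run in parallel."""
    return max(MEDIAN_DAYS[c] for c in open_categories)


print(expected_close(["cap_table", "litigation", "hr_employment"]))
# 5.1
```

If a single team answers categories one after another, summing the medians instead of taking the max gives a more conservative estimate.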

What's the difference between a DDQ and a DD checklist?

A DDQ is the question artifact issued by the investor or buyer; a DD checklist is the document artifact assembled by the seller. The DDQ asks "What is your customer concentration as of the most recent quarter?"; the DD checklist names the file the seller must upload to answer it (e.g., "Top 20 customer revenue schedule, last 8 quarters"). Real deals use both: the buyer issues a DDQ, the seller's checklist is the inventory of files that will be uploaded to the data room to satisfy each question. For the file-side artifact see our 174-document M&A DD checklist; this post is about the question-side artifact.
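The two artifacts join naturally as a mapping from question to supporting files. This sketch uses hypothetical question IDs and file names. It shows how the seller can spot which DDQ questions still lack their evidence in the data room.

```python
# Hypothetical mapping: DDQ question -> files the seller's checklist says answer it.
ddq_to_files = {
    "Q-041 customer concentration": ["Top 20 customer revenue schedule, last 8 quarters"],
    "Q-087 IP assignment": ["Contractor IP assignment register",
                            "Employee invention assignment agreements"],
    "Q-112 litigation": ["Litigation summary from counsel"],
}

# Files already uploaded to the data room.
uploaded = {
    "Top 20 customer revenue schedule, last 8 quarters",
    "Contractor IP assignment register",
}


def unanswered(ddq_to_files, uploaded):
    """Map each question to its still-missing files; fully covered questions are omitted."""
    gaps = {}
    for question, files in ddq_to_files.items():
        missing = [f for f in files if f not in uploaded]
        if missing:
            gaps[question] = missing
    return gaps
```

Running `unanswered(ddq_to_files, uploaded)` here flags Q-087 (one assignment file missing) and Q-112. Those are the questions that cannot reach a GREEN band yet.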

What's in an LP-to-GP DDQ?

An LP-to-GP DDQ is the questionnaire a limited partner sends a general partner before committing to a private fund. The ILPA DDQ 2.0 (released 2021, now used by roughly 60-70% of institutional LPs per industry adoption estimates) and the AIMA 2025 Illustrative Questionnaire are the two dominant templates. Sections include firm overview, investment strategy and process, team and key persons, track record, portfolio construction, fees and economics, fund terms (including ILPA Principles alignment), compliance and conflicts, valuation and reporting, operations and service providers, ESG (PRI-aligned), and DEI metrics. The 2025 PRI Climate Module supplement adds climate-related questions in collaboration with ILPA and iCI. Most LP-to-GP DDQs run 150 to 300 questions and are issued before the LP signs an LPA.

What's in a Vendor DDQ and why does SOC 2 matter most?

If you're a procurement, security, or GRC lead about to onboard a third-party vendor, the Vendor DDQ is the questionnaire you issue before contract signing or renewal. The Shared Assessments SIG (Standardized Information Gathering) Lite is the dominant baseline at approximately 126 questions, covering 21 control domains from access management to incident response. Larger vendors get the SIG Core (~1,000+ questions). SOC 2 Type II attestation is the single most weighted document attached to a Vendor DDQ response. It is an independent CPA-firm audit that tests whether the vendor's controls for security (always in scope) and, where included, availability, processing integrity, confidentiality, and privacy operated effectively over a review period, typically 6-12 months. A SIG response describes the controls; a SOC 2 Type II report attests that they actually operate as described. Enterprise procurement teams in 2025-2026 increasingly treat SIG Lite as the entry filter and SOC 2 Type II as the substantive proof.
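The "entry filter, then proof" pattern is a two-stage gate. This is a minimal sketch with hypothetical inputs: whether the SIG Lite response is complete, and which SOC 2 report type, if any, was provided. It is not a Shared Assessments or AICPA scoring method.

```python
from typing import Optional


def vendor_status(sig_lite_complete: bool, soc2_report: Optional[str]) -> str:
    """Two-stage triage: SIG Lite as entry filter, SOC 2 Type II as proof (hypothetical)."""
    if not sig_lite_complete:
        return "blocked: SIG Lite response incomplete"
    if soc2_report != "Type II":
        # A SIG answer describes controls; a Type I report covers design at a point
        # in time, not operating effectiveness over a period.
        return "conditional: controls described but not attested over a period"
    return "proceed: controls described and attested"
```

The order matters: a vendor that cannot complete SIG Lite is stopped before anyone spends time reading an audit report.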

What is the DDQ trap that most often kills mid-market M&A deals?

If you're a seller-side CFO or advisor staring down a 300-question buyer DDQ, the single most common trap that kills mid-market M&A is the customer-concentration cliff: when your DDQ response shows a top customer above 30% of revenue, deal failure rates spike and valuation compression of 15-30% becomes standard, especially among PE buyers and SBA lenders. Below 10% per customer is viewed as clean; 10-20% is manageable with diligence questions; 20-30% triggers earnouts or holdbacks; above 30% causes many buyers to walk. The trap is rarely the concentration itself — it's that sellers under-disclose it on the initial DDQ, the buyer finds it in commercial DD weeks later, and the deal either re-prices sharply or breaks. Other recurring traps include IP assignment gaps for contractor-built code, undisclosed sales-tax nexus, and revenue-recognition disputes under ASC 606.
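The concentration thresholds above translate directly into a band check the seller can run before answering the DDQ. The exact boundary handling (whether 20% counts as "manageable" or "structure") is my assumption. The source gives ranges, not inclusive or exclusive edges.

```python
def concentration_band(top_customer_revenue: float, total_revenue: float) -> str:
    """Classify top-customer share using the 10/20/30% thresholds (edge handling assumed)."""
    if total_revenue <= 0:
        raise ValueError("total_revenue must be positive")
    share = top_customer_revenue / total_revenue
    if share < 0.10:
        return "clean"
    if share <= 0.20:
        return "manageable: expect diligence questions"
    if share <= 0.30:
        return "structure: expect earnout or holdback"
    return "cliff: many buyers walk"
```

Running this on the last eight quarters, not just the latest one, shows whether a customer is trending toward the 30% cliff. That is the disclosure buyers find later in commercial DD when the seller skips it.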

Do you need a separate DDQ for ESG and climate risk?

In 2026 most institutional LPs no longer issue a separate ESG DDQ — they integrate ESG questions into the main fund DDQ using the PRI Limited Partners' Responsible Investment DDQ as a module. The 2025 PRI Climate Module supplement, published in collaboration with ILPA and iCI, provides a focused set of climate-related questions for LPs evaluating how managers govern climate risks under TCFD-aligned frameworks. As of 2026, IFRS S1 and S2 (which integrate and build on the TCFD recommendations and incorporate SASB industry-based disclosure metrics) are being adopted across more than 30 jurisdictions, which is shifting climate DDQ questions from voluntary best-practice to mandatory regulatory baseline.

How does the DDQ flow into the data room and staged disclosure?

A well-run DDQ and a well-run data room are two views of the same workflow. The DDQ defines the questions; the data room is where the answer documents live; staged disclosure controls who sees what at each phase. Best practice: in Phase 1, the issuer sends the DDQ and the responder uploads Tier 1 documents (overview, high-level financials, redacted contracts) to a preliminary data room behind an NDA gate. In Phase 2, once the counterparty is qualified or shortlisted, Tier 2 documents (full financials, customer schedules, IP registers, audited reports) unlock alongside DDQ follow-ups. In Phase 3, after the term sheet or LOI, Tier 3 documents (sensitive schedules, employee-level data, side letters) open to the final counterparty only. Peony's page-level analytics show the issuer which documents each reviewer actually opened. Deal leads can then pace the DDQ follow-up cycle on real engagement signal rather than calendar guesswork.